AI is changing how we live and work. But as AI gets smarter, we need to be careful with how we make, use, and control it. Knowing about Responsible AI is key to making sure tech grows with our values. It’s not just about what AI can do, but what it should do. Responsible AI focuses on making things fair, clear, and accountable over time.

As companies rely more on AI to make decisions, give advice, and analyze data, people are noticing the risks. This piece looks at what Responsible AI is, why we need it, and how it’s applied across industries and regions.

The Ethical Foundation of Responsible AI

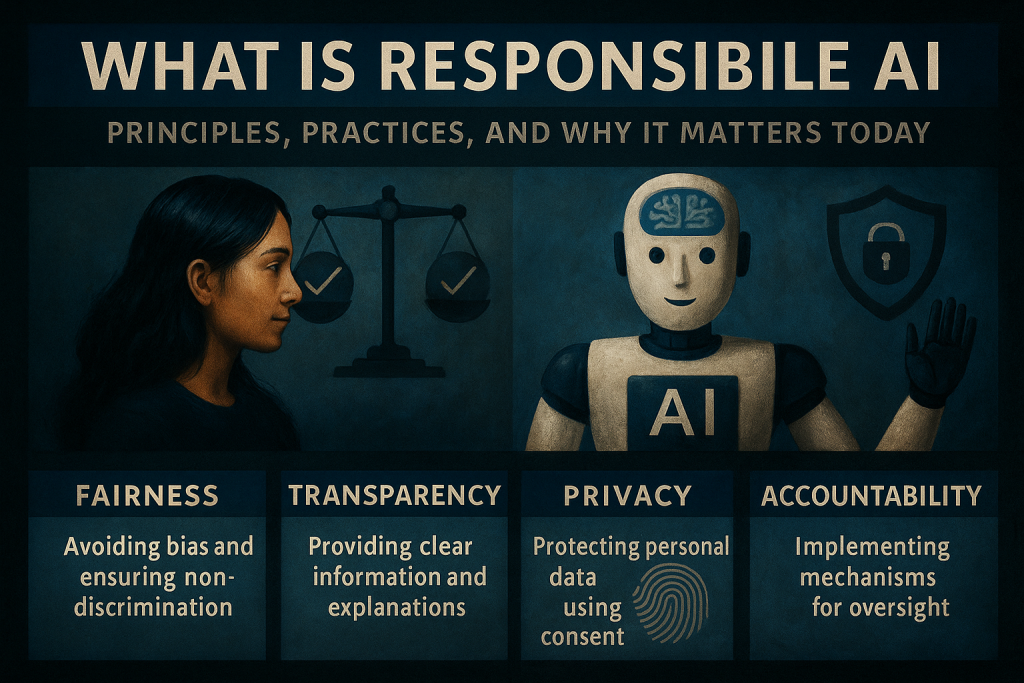

To grasp Responsible AI, start with ethics. It rests on a few core ethical commitments that keep algorithms working for society.

Fairness and Non-Discrimination

AI bias is a known problem. A hiring algorithm might favor certain groups. A facial recognition system could have higher error rates for some racial groups. Fairness is key. Responsible AI tries to detect and reduce bias and to ensure equity.
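One way to make the hiring example concrete is to compare how often each group is selected. The sketch below is illustrative only: the function names, the group labels, and the sample data are assumptions, and a low ratio is a prompt to investigate rather than proof of discrimination.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Return the share of positive decisions per group.

    `decisions` is an iterable of (group, selected) pairs, where
    `selected` is True when the algorithm advanced the candidate.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest group selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group was favored
    return min(rates.values()) / highest

# Made-up data: group "A" is selected 3 times out of 4, group "B" 1 time out of 4.
sample = [("A", True), ("A", True), ("A", True), ("A", False),
          ("B", True), ("B", False), ("B", False), ("B", False)]
rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
```

A commonly cited rule of thumb treats ratios below about 0.8 as worth a closer look, but the right threshold depends on the context and on local law.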

Accountability

AI can’t just operate on its own. People need to take charge of what it does. Developers, businesses, and institutions must keep an eye on AI, change it when needed, and make sure it works right.

Transparency and Explainability

Opaque systems pose risks, especially in finance, healthcare, or law. AI needs to be transparent. People must be able to see how decisions are made and challenge them if needed.
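For simple models, an explanation can be as direct as showing how much each input pushed the result up or down. The following is a minimal sketch that assumes a linear scoring model; the weights, feature names, and applicant values are hypothetical, and more complex models need dedicated explanation methods.

```python
def explain_score(weights, features, bias=0.0):
    """Return (score, ranked contributions) for a linear scoring model.

    Each contribution is weight * feature value, so the contributions
    plus the bias add up to the score.
    """
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    # Largest absolute contribution first, so the main drivers are listed on top.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked

# Hypothetical weights and applicant values, chosen only for illustration.
weights = {"income": 0.5, "debt": -0.8, "years_employed": 0.3}
applicant = {"income": 4.0, "debt": 3.0, "years_employed": 2.0}
score, ranked = explain_score(weights, applicant)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

A person affected by the decision can then see which factor weighed most heavily and question it.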

Core Principles of Responsible AI

Moving from ethics to practice, Responsible AI comes down to a few actionable principles:

Human Oversight and Control

People should guide AI choices. We need humans to check, understand, and sometimes change what AI decides.
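A common way to put this into practice is to let the system act alone only when it is confident and the stakes are low, and to queue everything else for a person. The sketch below assumes a model that reports a confidence value; the `Decision` type, the 0.9 threshold, and the status labels are illustrative choices, not a standard.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    outcome: str          # what the model proposes, e.g. "approve"
    confidence: float     # model confidence in [0, 1]
    decided_by: str = "model"

def route(decision, threshold=0.9, high_stakes=False):
    """Send low-confidence or high-stakes decisions to a human reviewer."""
    if high_stakes or decision.confidence < threshold:
        return Decision(decision.outcome, decision.confidence,
                        decided_by="pending_human_review")
    return decision

def apply_override(decision, reviewer_outcome):
    """Record the reviewer's outcome, which replaces the model's proposal."""
    return Decision(reviewer_outcome, decision.confidence, decided_by="human")

# Usage: a borderline case is held for review, then changed by the reviewer.
held = route(Decision("deny", 0.62))
final = apply_override(held, "approve")
print(held.decided_by, final.outcome, final.decided_by)
```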

Privacy and Data Governance

AI must not misuse data. Ethical AI collects data fairly, keeps it anonymous when needed, and guards it against leaks. Consent and openness are crucial.
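As one small illustration of guarding data, direct identifiers can be dropped or replaced before records reach a training pipeline. This sketch uses a keyed hash from the Python standard library; the field names and the record are hypothetical. Note that this is pseudonymization, not full anonymization: anyone holding the key, or enough of the remaining fields, may still be able to re-identify a person.

```python
import hashlib
import hmac

def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()

def strip_record(record: dict, secret_key: bytes,
                 id_field: str = "email", drop=("name", "phone")) -> dict:
    """Return a copy with direct identifiers dropped and the ID field pseudonymized."""
    cleaned = {k: v for k, v in record.items() if k not in drop}
    cleaned[id_field] = pseudonymize(record[id_field], secret_key)
    return cleaned

# Hypothetical record and key; a real key would come from a secrets manager.
key = b"example-key-do-not-use"
record = {"name": "Ada", "email": "ada@example.org", "phone": "555-0100", "age": 36}
cleaned = strip_record(record, key)
print(sorted(cleaned))
```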

Safety and Reliability

AI systems must behave reliably within defined operating conditions. They need fallback plans for when something goes wrong. This helps users trust them more. Trust is key when AI runs important things or makes big choices in life.
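A backup plan can be as simple as refusing to act on an output that fails basic checks. The sketch below assumes the model is any callable that returns a number in a known range; the function name, the range, and the fallback value are illustrative assumptions.

```python
def predict_with_fallback(model, features, valid_range=(0.0, 1.0), fallback=None):
    """Call `model(features)` and fall back when it fails or misbehaves.

    Returns (value, source), where source is "model" or "fallback".
    """
    low, high = valid_range
    try:
        value = model(features)
    except Exception:
        # The model crashed: use the safe default instead of propagating the error.
        return fallback, "fallback"
    # NaN fails this comparison too, so it is also routed to the fallback.
    if not isinstance(value, (int, float)) or not (low <= value <= high):
        return fallback, "fallback"
    return value, "model"

def broken_model(_features):
    raise ValueError("simulated failure")

print(predict_with_fallback(lambda f: 0.4, {"x": 1}))
print(predict_with_fallback(broken_model, {"x": 1}, fallback=0.0))
```

In a real system the fallback would usually be a conservative default or a handoff to a person, and each fallback event would be logged for review.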

Why Responsible AI Matters

AI is now everywhere. It helps doctors find illnesses, drives cars, predicts money trends, and even helps in hiring and law. Knowing Responsible AI means seeing how tech touches our lives.

- Social Impact

AI without rules can make biases worse, invade privacy, or take jobs. Good AI should help, not harm.

- Regulatory Pressure

Laws like the EU’s AI Act push for ethical AI. Ignoring this can lead to legal and financial trouble.

- Brand Trust and User Adoption

People adopt AI products they trust. Being open about how a system works and where its limits lie helps build that trust.

How Responsible AI Supports Human Rights

AI can promote fairness if it’s built right. It supports justice and equity by respecting human dignity.

- Avoiding Bias and Injustice

Responsible AI works to remove bias. It stops unfair decisions based on race, gender, disability, or income.

- Promoting Digital Equality

Responsible AI makes sure everyone gets equal access to tech benefits. This includes tools for health checks, learning, and money services.

- Safeguarding Freedom of Expression

AI that is well-designed values free speech. It welcomes different views. This is key on platforms that suggest or control content.

Responsible AI in Practice – Industry Examples

To see what Responsible AI looks like in practice, consider these real-world examples:

- Healthcare

Responsible AI keeps patient data private. It also reduces diagnostic bias by training on varied datasets.

- Finance

Responsible AI cuts risk in credit scoring. It flags unfair patterns and supports fair loan checks.

- Law Enforcement

AI tools, like those in predictive policing or facial recognition, need strict ethical rules. This helps prevent profiling and misuse.

- Education

AI helps make learning fair. It adapts to students’ needs and makes sure everyone gets a chance to succeed.

Corporate Responsibility in AI Development

Companies play a critical role in shaping Responsible AI:

- Ethics Committees

Internal boards review the likely effects of AI projects before they start. These groups include ethicists, lawyers, and other stakeholders.

- Bias Audits

Regular checks assess systems for bias. This helps keep product results in line with company values and regulations.

- Community Feedback

Leading firms talk to affected communities early. They learn about concerns and adjust plans.
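A recurring bias audit can be partly scripted. The sketch below compares error rates across groups on labeled outcomes and reports whether the gap stays within a chosen limit. The record format, the 0.1 limit, and the sample data are assumptions for illustration, and a real audit would look at several metrics and involve human review.

```python
def error_rates_by_group(records):
    """`records` is an iterable of (group, predicted, actual); returns {group: error rate}."""
    totals, errors = {}, {}
    for group, predicted, actual in records:
        totals[group] = totals.get(group, 0) + 1
        if predicted != actual:
            errors[group] = errors.get(group, 0) + 1
    return {g: errors.get(g, 0) / totals[g] for g in totals}

def audit(records, max_gap=0.1):
    """Report per-group error rates and whether the largest gap is within `max_gap`."""
    rates = error_rates_by_group(records)
    if not rates:
        return {"rates": {}, "gap": 0.0, "passed": True}
    gap = max(rates.values()) - min(rates.values())
    return {"rates": rates, "gap": gap, "passed": gap <= max_gap}

# Made-up outcomes: group "A" has 1 error in 4 cases, group "B" has 2 errors in 4.
outcomes = [("A", 1, 1), ("A", 0, 0), ("A", 1, 0), ("A", 0, 0),
            ("B", 1, 0), ("B", 0, 1), ("B", 1, 1), ("B", 0, 0)]
report = audit(outcomes)
print(report)
```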

Responsible AI vs. Ethical AI – What’s the Difference?

Ethical AI looks at what should be done. It’s about principles and intent. Responsible AI deals with actions. It’s about what organizations do to ensure safety, fairness, and accountability. Both together create a full plan for ethical tech.

The Role of Governments and Policymakers

Legislation is key to reinforcing Responsible AI practices:

- Global AI Policies

Governments make AI laws, set up ethics groups, and work together worldwide to guide AI growth.

- Public-Private Collaboration

Tech firms and governments can work together. Shared guidelines help organizations adopt Responsible AI faster.

- Funding and Incentives

Public money goes to research that uses AI for good, like climate models or accessibility tools.

How Startups Can Build Responsible AI From Day One

You don’t need massive resources to act responsibly.

- Build Lean but Ethical

Startups should focus on fair and clear design from the start.

- User Trust Is a Currency

Being open about AI limits boosts trust. Early users like clear and honest talk.

- Ethical by Default

Startups that care about accessibility, use diverse data, and listen to feedback often grow better.

Responsible AI in AI Training and Datasets

The old adage “garbage in, garbage out” holds true.

- Inclusive Datasets

Gather data from many sources so it reflects real-life situations and people.

- Bias Prevention

Algorithms need regular checks to confirm that their outputs are fair across groups.

- Ongoing Evaluation

Responsible AI is an ongoing process. Systems need updates and feedback to keep improving.
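A first check on whether a dataset is inclusive is to compare how each group is represented against a reference distribution. The sketch below is a minimal version of that idea; the group labels, the reference shares, and the 5-point tolerance are made-up assumptions, and choosing a sensible reference is itself a judgment call.

```python
from collections import Counter

def representation_gaps(groups, reference_shares, tolerance=0.05):
    """Compare each group's share of the dataset with a reference share.

    Returns {group: observed_share - reference_share} for groups whose
    absolute gap exceeds `tolerance`.
    """
    counts = Counter(groups)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        gap = observed - expected
        if abs(gap) > tolerance:
            gaps[group] = round(gap, 3)
    return gaps

# Made-up labels: 8 "urban" rows and 2 "rural" rows, against a 60/40 reference.
labels = ["urban"] * 8 + ["rural"] * 2
gaps = representation_gaps(labels, {"urban": 0.6, "rural": 0.4})
print(gaps)
```

Running the same check after each data refresh is one simple form of ongoing evaluation.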

Challenges in Implementing Responsible AI

Even with the best intentions, roadblocks exist:

- High Costs

Testing, audits, and governance need resources. Not all organizations can pay for them.

- Lack of Standards

There is no worldwide agreement on AI ethics, so practices are hard to align across industries or countries.

- Cultural Differences

Ethical norms differ by country. AI should adapt with care.

Tools and Frameworks Supporting Responsible AI

Understanding responsible AI involves knowing the tools available:

- IBM AI Fairness 360

An open-source toolkit that helps find and fix bias in machine learning models.

- Google’s What-If Tool

A tool for seeing how changes to inputs affect a model’s results and for spotting bias.

- Ethical OS and AI Now Framework

Frameworks that help organizations plan for scenarios, manage governance, and assess impacts.

The Future of Responsible AI

Trends shaping the next decade include:

- AI Legislation Becoming Normative

We’ll get stricter and clearer rules for Responsible AI in different areas.

- AI Literacy Becoming Mainstream

Education helps people ask, learn, and talk about AI.

- Global Ethical Alliances

Cross-border teamwork helps set global rules for safe, fair AI use.

Conclusion

Responsible AI means making sure AI helps society, respects human rights, and follows ethical values. It’s not just up to developers; everyone, including businesses, regulators, and users, must be involved.

As AI grows, being responsible isn’t a choice; it’s necessary. Developers need to design thoughtfully, organizations should use AI ethically, and users must stay informed. The future of AI relies on our dedication to responsibility, openness, and focusing on people.

FAQs – What Is Responsible AI

1. What is the definition of Responsible AI?

Responsible AI means making and using AI systems that are fair, transparent, and ethical, with clear accountability for their outcomes.

2. Is Responsible AI required by law?

In some areas, yes. The EU’s AI Act sets rules for high-risk AI systems.

3. How do companies practice Responsible AI?

They conduct bias audits, create ethics boards, ensure transparency, and align their tools with regulations.

4. Can Responsible AI be automated?

Some checks can be automated, but Responsible AI needs people for judgment, especially in ethics and governance.

5. What are some tools that promote Responsible AI?

IBM AI Fairness 360, Google’s What-If Tool, Microsoft’s Responsible AI resources, and open-source governance frameworks.