As AI writing tools improve, it’s important to know whether people can tell when text was written by AI. We reviewed six studies to find out.

Can people tell if text is made by AI?

Some think they can, but others trust tools like Bypass Engine.

Because failing to spot AI text can have serious consequences, it matters whether people can actually recognize AI writing. The six studies below put that question to the test.

Main Points (Short Version)

- Even when they are confident in their skills, people often struggle to recognize AI-generated text.

- AI detectors do a much better job than people at finding AI text.

- Not being able to spot AI text can lead to problems like misinformation, cheating in school, and fake content online.

Can People Spot AI-Written Text? 6 Studies Detailed

Even though many people feel confident, they generally find it hard to spot AI-written text. Our own review of words commonly associated with ChatGPT showed that identifying ChatGPT output is trickier than expected.

To dig deeper, we’re breaking down six third-party studies on how well people spot AI-written text.

1. Are teachers able to recognize AI? Looking into how well teachers can spot AI-written texts in student essays.

Study summary

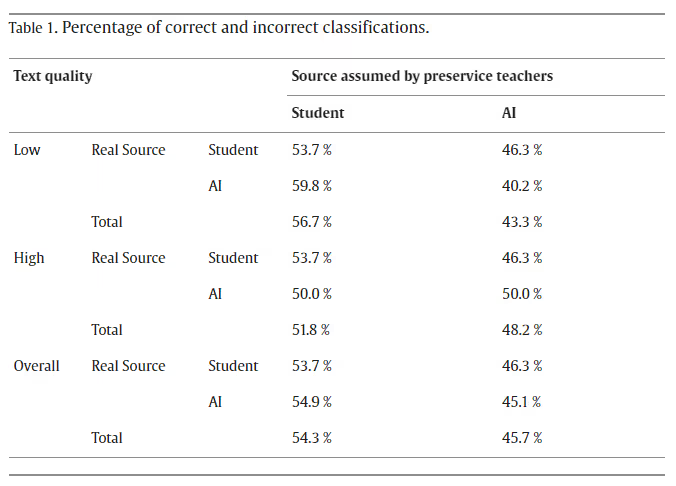

This study, accessible on ScienceDirect, looks at how good teachers are at spotting AI-created content.

As AI improves, spotting AI-written work matters more and more in education. The researchers therefore ran tests to find out whether teachers could tell AI-written text from human-written text.

The study had two groups of participants:

Study 1 (participants were future teachers)

“Future teachers correctly identified only 45.1% of AI-written texts and 53.7% of student-written ones.”

Study 2 (participants were experienced teachers)

“They recognized only 37.8% of AI-generated texts accurately, but they correctly identified 73.0% of student-written texts.”

Main points:

- Participants struggled to consistently tell AI-written content apart from human-written content.

- Pre-service teachers got only 45.1% of AI texts right.

- Experienced teachers correctly identified just 37.8% of AI texts.

- Even when participants felt sure, they were often wrong, showing they overestimated their ability to spot AI.
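One way to read the two groups’ numbers side by side is to average each group’s hit rate on AI texts with its hit rate on student texts. The short Python sketch below does that. Note that `balanced_accuracy` is a hypothetical helper, not something taken from the study, and it assumes both text types count equally, which may not match how the study weighted them.

```python
def balanced_accuracy(ai_hit_rate: float, human_hit_rate: float) -> float:
    """Mean of the two per-class hit rates (assumes each class counts equally)."""
    return (ai_hit_rate + human_hit_rate) / 2


# Percentages reported above: (AI texts identified, student texts identified)
groups = {
    "pre-service teachers": (45.1, 53.7),
    "experienced teachers": (37.8, 73.0),
}

for name, (ai_rate, human_rate) in groups.items():
    score = balanced_accuracy(ai_rate, human_rate)
    print(f"{name}: {score:.1f}% balanced accuracy (guessing = 50.0%)")
```

Under that equal-weight assumption, both groups land within about six points of the 50% expected from guessing.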

Conclusion:

These findings suggest that teachers find it hard to tell AI-generated work from human-written work without help from top AI detection tools.

Study: Are teachers able to recognize AI? Looking into how well teachers can spot AI-written texts in student essays.

2. Not Everything ‘Human’ is Gold: Judging Human Evaluation of AI Text

Study Summary

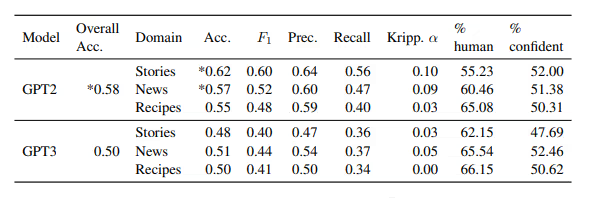

In a study available on arXiv (hosted by Cornell University), researchers looked at how well people can tell whether a text was written by AI or by a human.

They checked out stories, news pieces, and recipes.

For the study, they looked at 255 stories from social media. Out of those, they picked 50 human-written stories. They also created 50 more stories using prompts.

They reviewed 2,111 news articles from 15 news outlets. From these, they selected 50 articles. Then, they generated 50 more articles from prompts.

They included 50 human-written recipes from the RecipeNLG dataset, along with 50 more recipes made from prompts.

Key Findings

The findings showed that people often struggled to spot AI-generated content.

Participants guessed correctly 57.9% of the time when distinguishing GPT-2 text from human-written text (rounded to 58% in the study’s table).

For GPT-3, their accuracy was 49.9% (rounded to 50%), which is no better than a coin flip.

Even when participants received some training in spotting AI content before the test, their scores improved only slightly.

The results indicate that people have difficulty recognizing text created by AI, even when they’ve been trained for it beforehand.

Learn about how accurate AI detectors are, based on a meta-analysis conducted by third-party researchers examining studies on AI detection accuracy.

Study: Not Everything ‘Human’ is Gold: Judging Human Evaluation of AI Text

3. Study: People struggle to tell GPT-4 from a human

Summary of research

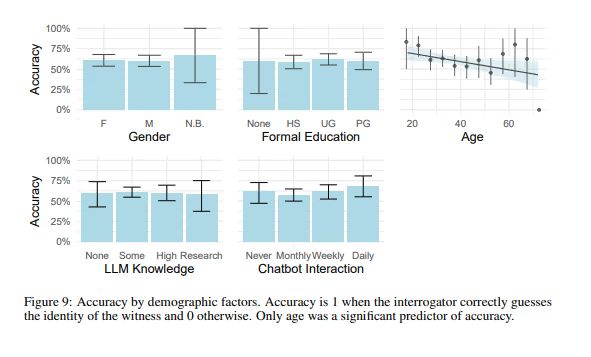

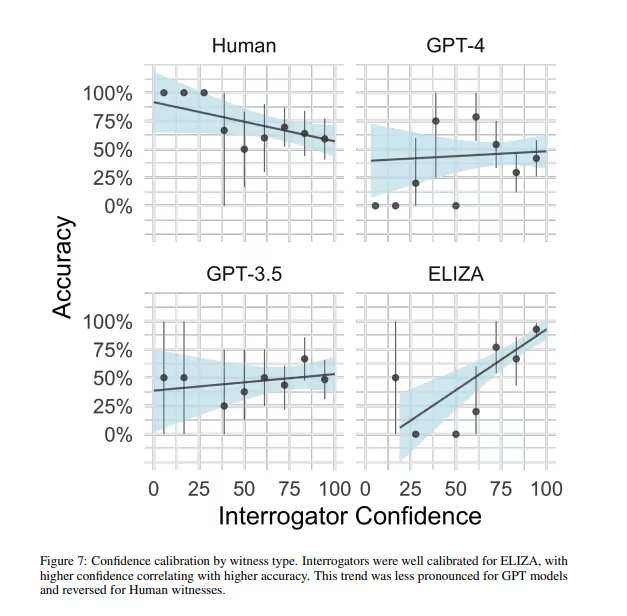

This research, available on arXiv (hosted by Cornell University), examines how well people can spot text produced by three AI systems: GPT-4, GPT-3.5, and ELIZA.

The study aimed to see whether people could recognize AI-written content. It recruited 500 participants, who tested their skills in a ‘game’ resembling a chat app.

The study also looked at whether knowing about AI or being familiar with large language models (LLMs) changed how well people did.

Key discoveries

- Much like past studies, the research indicates that people mostly couldn’t tell AI-written text apart from human-written text.

- Human text was correctly spotted as human-made 67% of the time.

- GPT-4 was wrongly seen as human 54% of the time.

- ELIZA was mistaken for human-written 22% of the time.

- Familiarity with LLMs had only a slight effect on how accurate people were.

- Interestingly, age played the biggest role in accuracy.

- Younger people were generally better at identifying AI models compared to older people.

Summary

Humans often can’t tell whether text is AI-made, even when they are familiar with AI and LLMs.

Want to know more about spotting AI? Check out the Bypass Engine Content Detector Accuracy Review.

Study: People can’t tell GPT-4 from a person in a Turing test.

4. Can you tell if it’s a bot? Picking out AI-made writing in college essays

Study summary

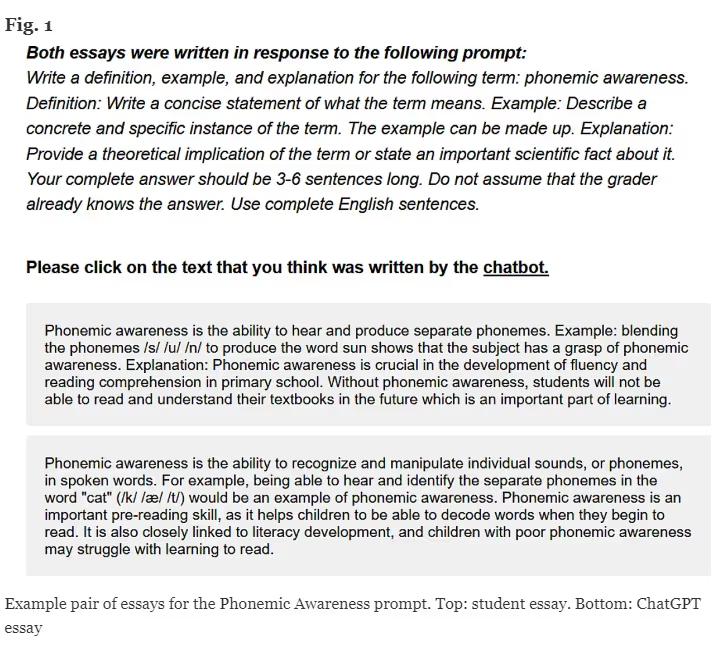

A study published in the International Journal for Educational Integrity looked closely at how well people spot AI writing in academic work. The researchers wanted to know whether participants could tell AI-written essays from human-written ones.

The study had 140 college teachers and 145 college students take part. Each person got one essay written by a student and another by AI. They had to decide which essay was created by AI and which one a student wrote.

Key Insights

- Participants had trouble telling AI-written essays from student-written ones.

- College teachers got it right 70% of the time with ChatGPT.

- Students got it right 60% of the time with ChatGPT.

Takeaway

Both teachers and students find it hard to spot AI in schoolwork.

Study: Can you tell if it’s a bot? Picking out AI-made writing in college essays

5. Reviewers Find It Hard to Spot ChatGPT-Generated Abstracts in Shoulder and Elbow Surgery

Overview

This study, featured in Arthroscopy: The Journal of Arthroscopic & Related Surgery, examines how well reviewers can identify abstracts created by ChatGPT.

In the study, participants were given a mix of abstracts, some made by AI and others by humans. They had to decide which ones they thought were AI-generated.

Key Findings

Reviewers correctly identified AI-generated content 62% of the time. However, 38% of the time, they mistakenly thought human-written abstracts were made by AI. This shows that relying solely on human judgment is not enough, and other AI detection methods are necessary.

Conclusion

This study, like others, shows that people find it challenging to detect AI-generated content.

6. Can ChatGPT Outsmart the System? Comparing AI and Human Personal Statements in Plastic Surgery Residency Applications

Study Summary

This study comes from the Canadian Society of Plastic Surgeons. It looks at the content of medical residency applications, which is a very specialized area.

Two surgeons, who recently retired after 20 years of working with the Canadian Residency Matching Service (CaRMS), took part in this study. They had to review 11 applications written by AI and 11 by humans. Their task was to figure out which ones were created by ChatGPT-4 and which were human-written.

Main Findings

The study found that the evaluators could tell AI and human applications apart 65.9% of the time. The paper pointed out that having only a small number of samples was a drawback, but the evaluators’ expertise was a significant advantage.
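To see why the small sample matters, it helps to turn the percentage back into counts. The Python sketch below is a rough illustration rather than the paper’s own analysis. It assumes that both evaluators judged all 22 statements (44 judgments in total) and that each judgment is independent, neither of which is confirmed by the summary above. The helper names `implied_correct` and `binomial_upper_tail` are hypothetical.

```python
from math import comb


def implied_correct(n_judgments: int, accuracy_pct: float) -> int:
    """Whole number of correct calls closest to the reported percentage."""
    return round(n_judgments * accuracy_pct / 100)


def binomial_upper_tail(k: int, n: int, p: float = 0.5) -> float:
    """P(X >= k) for X ~ Binomial(n, p): chance of at least k correct by guessing."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Assumption: 2 evaluators x (11 AI + 11 human statements) = 44 judgments.
n = 2 * (11 + 11)
k = implied_correct(n, 65.9)

print(f"{k}/{n} correct = {100 * k / n:.1f}%")
print(f"P(at least {k} correct by pure guessing) = {binomial_upper_tail(k, n):.3f}")
```

With so few judgments, every single call moves the headline accuracy by more than two percentage points, which is why the authors flag sample size as a limitation.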

Conclusion

The results highlight that spotting AI-generated content is tough for people. Even experts with over 20 years of experience in medical residency applications find it hard to tell human-written and AI-written applications apart.

Concluding Remarks

Can people spot text made by AI? These studies suggest that, for the most part, they cannot.

People sometimes get it right, but mostly they find it hard to tell AI text from human text. A good AI text detector can therefore provide a useful second opinion.

FAQs About AI Detection

Can people spot AI-made content?

Generally, it’s tough for people to recognize when content is AI-created. Research suggests that people’s ability to detect it varies a lot.

How precise are AI detection tools?

The success rate of these tools differs based on which one you use. The Bypass Engine AI Checker claims over 99% accuracy. Plus, the Turbo model also boasts over 99% accuracy in spotting AI-generated content.

For a comparison of popular AI tools, you might want to look at our AI detection review series.

What happens if we can’t identify AI content?

This could lead to big problems, like spreading false info, cheating in school, and losing trust in what we read online.